Thesis

Semantic Space proposes that navigation and creation can be the same gesture. Most digital spaces separate these acts: you scroll to explore, you post to create. Here, every movement requires generation. To see what's nearby, you must make something that belongs nearby. To reach a distant post, you must generate a path toward it, step by step, through semantic territories you can barely see. The system treats meaning as having geometry—prompts exist as coordinates in conceptual space, and the map makes this geometry traversable. Distance isn't arbitrary; it's computed from embedding similarity, so "foggy mountain forest" genuinely sits closer to "misty valley" than to "neon cityscape." By tying movement to creation and making distant posts literally blurry and harder to navigate toward, the project argues that exploration should require effort proportional to distance, that agency and constraint can coexist, and that spatial interfaces can make the structure of semantic relationships tangible and walkable. The space resets on refresh because positions must stay honest to current embeddings—ephemerality isn't a limitation, it's a commitment to computational truth. This is a medium for people who want to traverse conceptual landscapes by generating their way across them, accepting that the far horizon stays uncertain until you walk there yourself.

Concept

Navigation Through Creation

Semantic Space rejects the feed. Instead of scrolling through a linear timeline curated by algorithms, users navigate a two-dimensional map where every step forward requires making something new. The system asks: what if exploration and creation were the same act? What if to see what's over there, you first had to imagine something that bridges here and there?

This isn't a gallery—it's a traversal system. Images aren't endpoints to be liked or collected; they're coordinates in semantic space, waypoints in an ongoing journey. The map reorganizes around you with every move. There is no global view, no minimap, no overview. You see only what's immediately near you, and to move, you must generate.

Ephemerality and Honest Positions

Nothing persists. Refresh the page and the space resets—only the 20 gradient placeholder posts remain, hand-placed in their fixed positions. Every generated post, every navigation step, every populated seed: gone.

This is by design. Semantic positions are computed from embeddings. If posts persisted across sessions, their coordinates would be based on yesterday's UMAP calculation, which ran on yesterday's dataset. As new posts were added, old positions would become stale: anchored to a projection that no longer reflects the current map's geometry.

By resetting on refresh, positions stay honest. Every session's UMAP calculation runs on only the posts that exist right now, in this session. If you generate 30 images, UMAP places them based on their relationships to each other, not to ghosts from prior sessions. The space is always internally consistent.

This also makes the project inherently exploratory. You can't build a permanent collection. You can't archive your path. The space is a session-based experience—you wander for 20 minutes, an hour, and when you leave, that journey is over. The next session is a fresh map.

The Semantic Map

Every prompt carries meaning, and meaning has geometry. When you write "foggy mountain forest at dawn," that phrase exists as a point in conceptual space—close to "misty valley," distant from "neon cityscape." The system makes this geometry visible.

Each generated image gets an embedding—a 1024-dimensional mathematical representation of its prompt's meaning. UMAP compresses these into 2D coordinates. Similar concepts cluster; distant concepts separate. The result is a landscape where thematic territories emerge organically: nature in one region, urban scenes elsewhere, abstraction at the edges, portraiture in its own zone.
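As a rough illustration of what "similar concepts" means numerically, the sketch below compares embedding vectors with cosine similarity. The three-dimensional vectors are made-up stand-ins for real 1024-dimensional embeddings, and the function name is hypothetical rather than taken from the project.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy vectors, invented for illustration only. Real embeddings have 1024 dimensions.
foggy_forest = [0.9, 0.4, 0.1]
misty_valley = [0.8, 0.5, 0.2]
neon_city = [0.1, 0.2, 0.9]

sim_near = cosine_similarity(foggy_forest, misty_valley)
sim_far = cosine_similarity(foggy_forest, neon_city)
print(sim_near > sim_far)  # True for these toy vectors
```

With real embeddings the same comparison would be expected to rank "misty valley" above "neon cityscape" for a forest prompt, which is the property UMAP then tries to preserve in 2D.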

You are always at the center. The world doesn't scroll past you—you move through it, and it reconfigures around your position. Every time you post an image, your anchor shifts to its location, and the ring system recalculates to show you the nearest posts from your new vantage point.

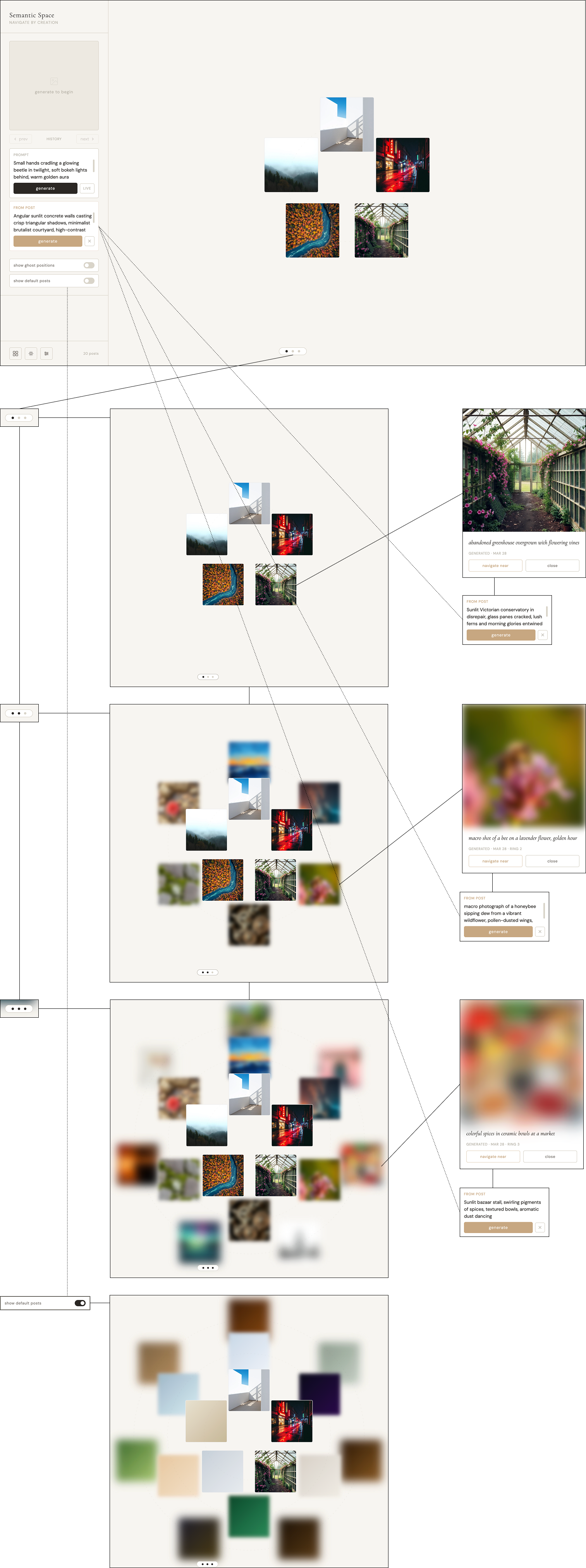

Distance as Degradation

The system treats distance honestly. In physical space, faraway objects blur, details disappear, certainty decreases. Semantic Space mirrors this: the further a post is from you in UMAP coordinates, the less clearly you can see it, and the less precisely you can navigate toward it.

This isn't a metaphor; it's a functional constraint. When you're in Stage 3 and click a heavily blurred post at the horizon, the reverse-engineering LLM receives only the first two words of that post's original prompt, and it is instructed to be "impressionistic and exploratory." You're not getting directions; you're getting a vague gesture in a general direction. Sometimes you land where you expected. Often you don't.

Visual / Prompt Dehancement

What This Enables

Semantic drift journeys: Start with "foggy mountain forest," navigate through progressively urban landscapes, end at "rainy neon streets" 30 steps later

Theme deep-dives: Stay in Stage 1, iterate within a single semantic cluster, developing 20 variations on minimalist still life

Horizon-chasing: Set Stage 3, identify a heavily blurred post that looks intriguing by color/composition alone, navigate toward it through multiple intermediate steps, see where you land

Collaborative territory-building: Admin-populate creates a landscape that could be shared. Multiple users exploring the same session would see the same map and could, in principle, coordinate to meet at specific semantic coordinates. The current implementation does not support multi-user sessions, so this remains hypothetical.

What This Rejects

Permanence: No save, no history, no archive. The journey is the experience.

Curation: No likes, no follows, no recommendations. The space doesn't judge content.

Efficiency: Navigation is deliberately slow. Getting somewhere distant takes real effort.

Omniscience: No global view, no minimap. You only see your local neighborhood.

Design Focus

The Ring System as Constrained Visibility

Traditional infinite canvases let you zoom out infinitely, pan anywhere, see the whole map. Semantic Space deliberately constrains this. You can't zoom out to see the global structure. You can't drag freely across the space. You have three zoom stages, each revealing only the posts immediately around you.

This constraint is the core mechanic. You're forced to navigate locally. You can't click on a post across the map because you can't see it. You can only see what's within three rings of where you currently are. To reach a distant post, you have to navigate step-by-step, clicking posts at your visible edge and re-generating to move incrementally in that direction.

Agency and Constraint in Equal Measure

Semantic Space gives you full creative control (you write the prompts) while constraining your movement (you can only go where embeddings allow). You can't teleport. You can't jump to arbitrary coordinates. You're bound by the geometry of meaning—if you're in "foggy forests" and want to reach "cyberpunk cityscapes," you have to navigate across the map, and that takes steps.

This creates an unusual relationship with the AI. You're not prompting it to generate images you want—you're using it as a navigation tool to move through semantic territories. The prompts you write are more like compass bearings than creative expressions. You're asking: "From here, which direction takes me closer to there?"

The system doesn't curate content for you. It doesn't recommend posts. It doesn't show you "popular" or "trending." It shows you the mathematical nearest neighbors to wherever you currently are. The space is neutral—it doesn't care what you're looking for. It just shows you what's nearby.

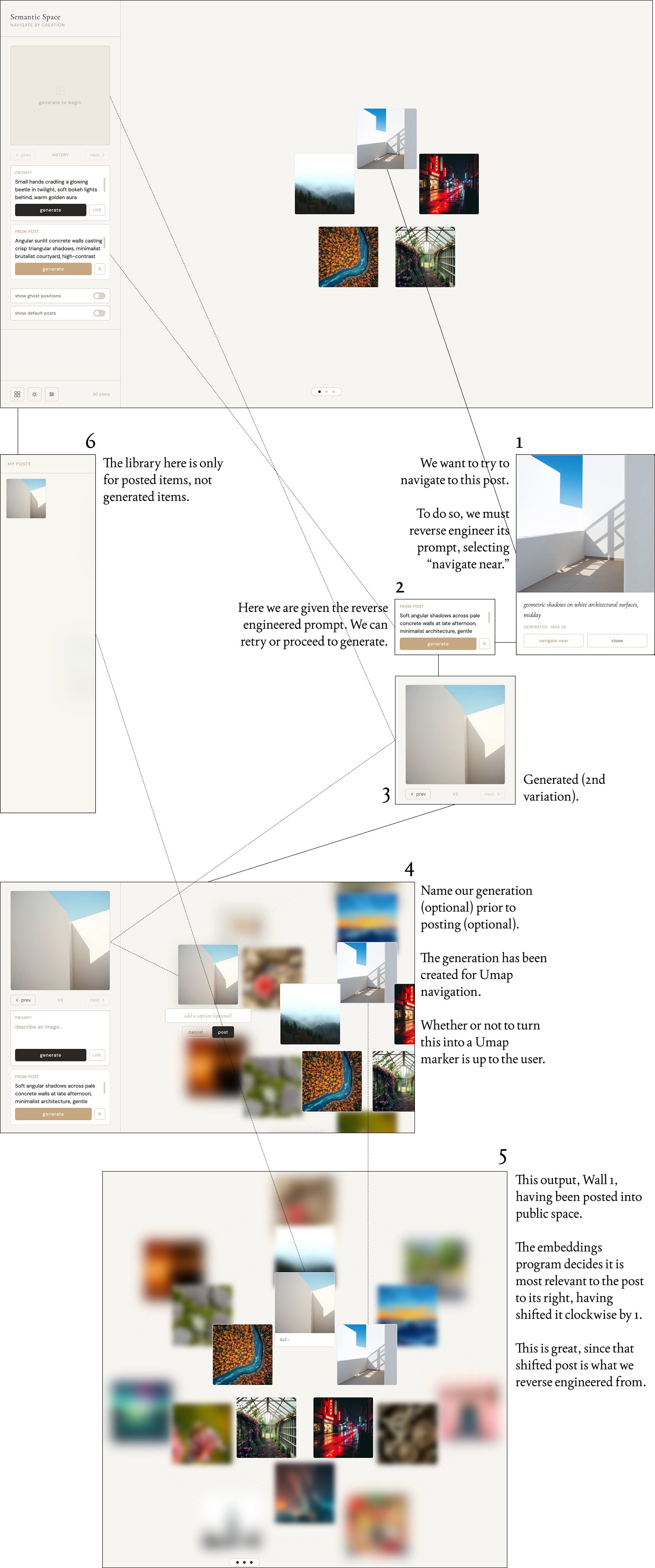

Reverse Engineering as Navigation

You don't navigate by clicking and dragging. You navigate by interpreting what you see, asking the system to reverse-engineer a related prompt, then generating from that prompt and posting. This multi-step process is deliberate friction.

When you click "use as starting point," the LLM looks at the original post's prompt and generates something adjacent. It doesn't copy—it varies. If the original was "neon-lit rainy street at night," you might get "wet pavement reflecting colored light, urban evening, cinematic." The new prompt is recognizably related but distinct.

This means every navigation step is a mutation. You're not teleporting to the post you clicked—you're generating something inspired by it, and that new image lands near (but not on) the original. Over multiple steps, you drift. The path from A to B is never a straight line because each step introduces variation.

The mutation scales with distance. Ring 1 mutations are small: tight semantic variations. Ring 3 mutations are large: loose interpretations based on minimal information. Navigating toward a distant blurred post means accepting that you'll land somewhere in that general direction, but the exact destination is unknown.

Why This Structure?

The ring system forces you to explore incrementally. You can't skip to the interesting parts because you don't know where the interesting parts are until you get there. The blur forces you to navigate with imperfect information, making exploration genuinely uncertain.

By tying navigation to generation, the system ensures you're always creating, not just consuming. Every move requires making something. This is slower, more deliberate, more effortful than scrolling—and that's the point. It's a spatial medium for people who want to explore conceptual territories by walking through them, not flying over them.

Interaction Diagram

Ring 1 / Stage 1: Precision

Visual Layout

A single ring of up to 5 posts arranged in a circle around you. Ring radius is approximately 32% of the viewport's smaller dimension, ensuring posts stay comfortably visible without reaching screen edges. Posts appear at full size (140×140px) with no blur or degradation.

Post Selection

The system finds your 5 nearest neighbors in UMAP space: the posts whose 2D coordinates lie closest to your current anchor position, which tend to be the posts with the most similar embeddings. These are distributed evenly around the ring at equal angular intervals (72° apart), starting from the top. If fewer than 5 posts exist in the space, the remaining slots stay empty.
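The selection and layout described here can be sketched as follows. This is a minimal sketch under stated assumptions: "nearest" is taken to mean Euclidean distance between 2D UMAP coordinates, slots are assumed to advance clockwise from the top, and the function names and the `xy` field are hypothetical.

```python
import math

RING_RADIUS_FRACTION = 0.32  # ~32% of the smaller viewport dimension (from the text)
RING_SLOTS = 5               # Ring 1 capacity (from the text)


def nearest_posts(anchor, posts, k=RING_SLOTS):
    """Return the k posts closest to the anchor in 2D map coordinates."""
    return sorted(posts, key=lambda p: math.dist(anchor, p["xy"]))[:k]


def ring_screen_positions(count, viewport_w, viewport_h, slots=RING_SLOTS):
    """Screen offsets from the viewport centre for `count` posts on one ring.

    Slot 0 sits at the top. Later slots advance by 360/slots degrees,
    clockwise on screen (an assumption; the text only says "from the top").
    """
    radius = RING_RADIUS_FRACTION * min(viewport_w, viewport_h)
    step = 2 * math.pi / slots  # 72 degrees when slots == 5
    positions = []
    for i in range(min(count, slots)):
        angle = -math.pi / 2 + i * step  # -90 degrees points up when y grows downward
        positions.append((radius * math.cos(angle), radius * math.sin(angle)))
    return positions


posts = [{"id": i, "xy": (float(i), 0.0)} for i in range(8)]
ring = nearest_posts((0.0, 0.0), posts)
offsets = ring_screen_positions(len(ring), 1000, 800)
```

Note that the on-screen slot positions are independent of the posts' UMAP coordinates: UMAP distance decides which posts appear, while the ring geometry decides where they are drawn.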

Reverse Engineering Behavior

The LLM receives the complete prompt and caption from the original post. Example flow:

Original post: "dense fog over mountain forest, early morning light filtering through"

LLM instruction: "Generate a closely related but distinct prompt"

Output: "misty pine valley at dawn, soft rays breaking through canopy, serene atmosphere"

The result stays semantically tight to the source — variations on the same theme, exploring details or perspectives within the same conceptual territory. Precision mode is for thematic iteration — when you want to stay in a specific semantic neighborhood and develop variations.

Ring 2 / Stage 2: Exploration

Visual Layout

Two concentric rings around you:

Inner ring (Ring 1): Up to 5 posts at ~32% viewport radius, sharp and clear

Outer ring (Ring 2): Up to 6 posts at ~58% viewport radius, visibly blurred

The outer ring sits at roughly 1.8× the radius of the inner ring (58% / 32% ≈ 1.81), creating clear visual separation. Posts in both rings are distributed evenly around their respective circles.

Post Selection

The system ranks all posts by UMAP distance from your anchor, then allocates:

Closest 5 → Inner ring

Next 6 closest → Outer ring

If fewer than 11 posts exist, outer ring slots may be partially empty. Inner ring always fills first.

Reverse Engineering Behavior

Behavior splits by ring:

Inner ring (Ring 1): Full fidelity, same as Stage 1

Original: "dense fog over mountain forest, early morning light filtering through"

LLM receives: Full prompt

Output: "misty pine valley at dawn, soft rays breaking through canopy"

Outer ring (Ring 2): Partial degradation

Original: "dense fog over mountain forest, early morning light filtering through"

LLM receives: "dense fog over mountain forest..." (first half only)

LLM instruction: "Given vague partial description, generate a loosely related prompt. Be somewhat approximate and exploratory."

Output: "foggy landscape with scattered trees, muted natural tones, atmospheric depth"

The resulting outer ring prompt loses specifics ("mountain," "early morning," "filtering through") and becomes more interpretive. The LLM is instructed to drift rather than stay precise.

Visual Clarity

Inner ring posts remain at full clarity (0px blur). Outer ring posts receive:

6px blur on canvas (enough to obscure fine details but maintain recognizable shapes and colors)

9px blur in the detail modal when opened (more pronounced degradation)

Captions still readable but slightly softened

The blur is applied via CSS filter: blur() to both the card thumbnail and the modal image.

Ring 3 / Stage 3: Horizon

Visual Layout

Three concentric rings:

Ring 1 (inner): Up to 5 posts at ~32% radius, sharp

Ring 2 (middle): Up to 6 posts at ~58% radius, medium blur

Ring 3 (outer): Up to 7 posts at ~82% radius, heavy blur

The outermost ring approaches the screen edges, creating a sense of reaching toward the horizon. Posts at this distance are barely identifiable — you see color palettes and rough shapes but not details.

Post Selection

All posts ranked by distance, then allocated:

Closest 5 → Ring 1

Next 6 → Ring 2

Next 7 → Ring 3

Maximum visible posts: 18. If the space has fewer than 18 posts, outer rings have empty slots.
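The allocation above can be sketched as a rank-then-slice step. This is a minimal sketch that assumes Euclidean distance in UMAP coordinates and a post list that excludes the anchor's own post; `allocate_rings` and the `xy` field are hypothetical names.

```python
import math

RING_CAPACITIES = (5, 6, 7)  # slots in Ring 1, Ring 2, Ring 3 (from the text)


def allocate_rings(anchor, posts, stage):
    """Split posts into `stage` rings, nearest first, so inner rings fill first."""
    ranked = sorted(posts, key=lambda p: math.dist(anchor, p["xy"]))
    rings, start = [], 0
    for capacity in RING_CAPACITIES[:stage]:
        rings.append(ranked[start:start + capacity])
        start += capacity
    return rings


posts = [{"id": i, "xy": (float(i), 0.0)} for i in range(20)]
stage3 = allocate_rings((0.0, 0.0), posts, stage=3)
print([len(ring) for ring in stage3])  # [5, 6, 7]
```

When the space holds fewer posts than the stage can show, the slices for the outer rings simply come back shorter, or empty, which matches the "empty slots" behaviour described.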

Reverse Engineering Behavior

Rings 1 and 2: Same as Stage 2

Ring 3: Minimal hint (first two words only)

Original: "dense fog over mountain forest, early morning light filtering through"

LLM receives: "dense fog..."

LLM instruction: "Given only a vague hint, generate a loosely inspired prompt. Be impressionistic and exploratory."

Output: "obscured atmosphere, soft gradients, minimal visibility, abstract weather"

The outermost ring produces prompts that are thematically adjacent but not literally related. The LLM has almost no information and is told to interpret freely. You might get weather, you might get mood, you might get abstract visual qualities, but you won't get a faithful variation.

Visual Clarity

Ring 1: 0px blur (sharp)

Ring 2: 6px blur (softened)

Ring 3: 14px blur on canvas, 21px blur in modal (heavily degraded — you can make out general composition and color but not subject matter)

Ring 3 blur is extreme enough that clicking a post feels like a gamble — you're navigating by impression, not information.
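The blur values listed for each ring can be collected into a small lookup that produces the CSS `filter` value. This is only a sketch: the function name is hypothetical, and the 0px modal blur for Ring 1 is an assumption, since the text states modal values only for Rings 2 and 3.

```python
# Blur radii in pixels, taken from the text. Ring 1 modal blur is assumed to be 0.
CANVAS_BLUR_PX = {1: 0, 2: 6, 3: 14}
MODAL_BLUR_PX = {1: 0, 2: 9, 3: 21}


def blur_filter(ring, in_modal=False):
    """Return a CSS `filter` value for a post in the given ring."""
    px = (MODAL_BLUR_PX if in_modal else CANVAS_BLUR_PX)[ring]
    return f"blur({px}px)" if px else "none"


print(blur_filter(3))                 # blur(14px)
print(blur_filter(2, in_modal=True))  # blur(9px)
```

In both blurred rings the modal value is 1.5× the canvas value (9/6 and 21/14), so a single multiplier would also reproduce the stated numbers.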

Functional Mechanics

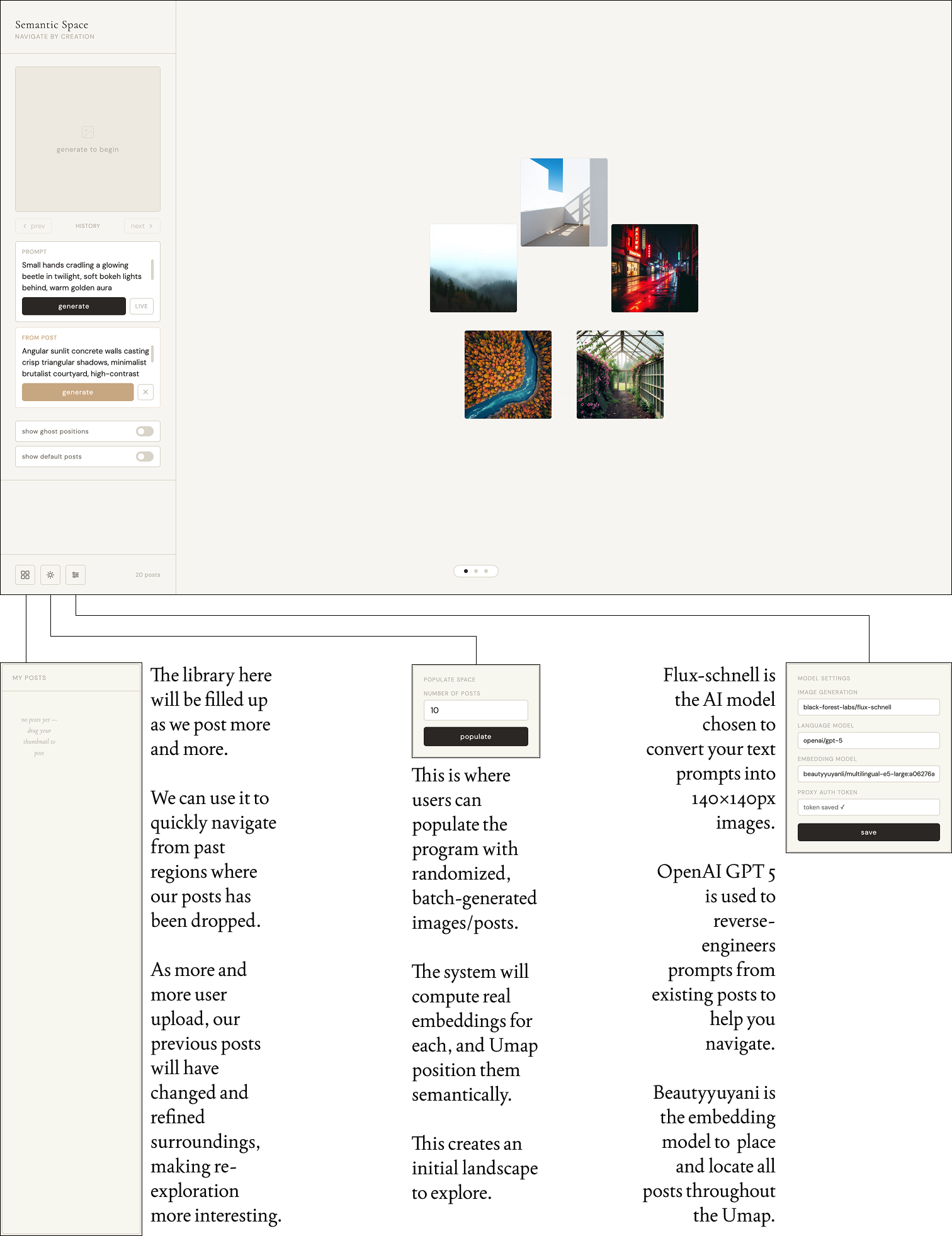

Image Generation

Images are generated with Flux Schnell through a Replicate proxy. Each prompt produces a 140×140px image, stored as a base64 data URL in browser memory.
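For readers unfamiliar with the storage format, a base64 data URL wraps the raw image bytes in a string that can be used directly as an image source. The sketch below shows the encoding step only. The helper name is hypothetical and the `image/png` MIME type is an assumption, since the text does not say which format the proxy returns.

```python
import base64


def to_data_url(image_bytes, mime_type="image/png"):
    """Encode raw image bytes as a `data:` URL (MIME type assumed, not from the source)."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


# Two placeholder bytes stand in for real image data.
print(to_data_url(b"hi"))  # data:image/png;base64,aGk=
```

Because the URL lives only in memory, it disappears with the page, which fits the no-persistence design.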

Semantic Embedding

Each prompt is encoded into a 1024-dimensional vector using a multilingual sentence embedding model. These vectors capture semantic meaning — prompts about similar subjects produce similar vectors.

Spatial Positioning

UMAP (Uniform Manifold Approximation and Projection) reduces embedding vectors from 1024 dimensions to 2D coordinates. Posts with similar embeddings cluster together in visual space. When fewer than 4 posts exist, new posts are placed near their semantically nearest neighbor with jitter to prevent stacking.
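The small-space fallback can be sketched as below. This is a minimal sketch, not the project's code: it assumes cosine similarity picks the "semantically nearest neighbor", that jitter is a uniform offset on each axis, and that the very first post sits at the origin. All names and the jitter size are hypothetical.

```python
import math
import random

MIN_POSTS_FOR_UMAP = 4  # below this count, the text describes the fallback (from the text)


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def fallback_position(new_embedding, posts, jitter=0.5, rng=random):
    """Place a new post beside its most similar existing post, with random jitter."""
    if not posts:
        return (0.0, 0.0)  # assumed starting point for an empty space
    nearest = max(posts, key=lambda p: cosine_similarity(new_embedding, p["embedding"]))
    x, y = nearest["xy"]
    # The uniform offset keeps posts from stacking on exactly the same point.
    return (x + rng.uniform(-jitter, jitter), y + rng.uniform(-jitter, jitter))


existing = [
    {"xy": (0.0, 0.0), "embedding": [1.0, 0.0]},
    {"xy": (5.0, 5.0), "embedding": [0.0, 1.0]},
]
placed = fallback_position([0.1, 0.9], existing, rng=random.Random(0))
```

Once the space reaches `MIN_POSTS_FOR_UMAP` posts, positions would come from the UMAP projection instead of this heuristic.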

Admin Populate

Batch-generates posts from a curated seed prompt list, each with real remote embeddings. UMAP runs on the full set (when 4+ posts) to position them semantically. All posts use the same embedding type to ensure valid similarity comparisons.

Default Posts

20 gradient placeholder posts load on every session with hand-placed UMAP coordinates and pre-computed local embeddings. These act as visual scaffolding but don't interfere with semantic placement of real generated posts. They can be toggled on or off.

Ring-Based Discovery

Posts are organized into concentric rings based on UMAP distance from the user's current anchor position:

Stage 1: One ring (5 posts max), sharp, full reverse-engineering fidelity

Stage 2: Two rings (5 + 6 posts), outer ring blurred at 6px and reverse-engineered from a partial prompt

Stage 3: Three rings (5 + 6 + 7 posts), progressive blur at 6px (Ring 2) and 14px (Ring 3), Ring 3 reverse-engineered from only a two-word hint

Posts are evenly distributed around each ring on screen, while their internal UMAP positions remain unchanged.

Reverse Engineering

An LLM analyzes an existing post's prompt and generates a related but distinct prompt. Ring distance affects both visual clarity (CSS blur) and how much of the original prompt the LLM receives:

Ring 1: Full prompt → precise variations

Ring 2: First half of prompt → exploratory variations

Ring 3: First two words → impressionistic interpretations
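The three fidelity levels above can be sketched as a truncation step applied before the prompt reaches the LLM. This sketch assumes "first half" is measured in whitespace-separated words, which reproduces the examples given for Rings 2 and 3. The function name is hypothetical.

```python
def degrade_prompt(prompt, ring):
    """Return the portion of a prompt that the LLM would see for a given ring."""
    words = prompt.split()
    if ring == 1:
        return prompt  # full fidelity
    if ring == 2:
        kept = words[: max(1, len(words) // 2)]  # first half, counted in words (assumed)
    elif ring == 3:
        kept = words[:2]  # first two words only
    else:
        raise ValueError(f"unknown ring: {ring}")
    # Drop a trailing comma so the ellipsis reads cleanly.
    return " ".join(kept).rstrip(",") + "..."


original = "dense fog over mountain forest, early morning light filtering through"
print(degrade_prompt(original, 2))  # dense fog over mountain forest...
print(degrade_prompt(original, 3))  # dense fog...
```

The truncated text would then be paired with the ring's instruction, such as "Be impressionistic and exploratory" for Ring 3, so both the input and the instruction loosen with distance.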

No Persistence

Posts reset on every refresh. Only the model settings and auth token persist. This ensures spatial positions are always computed from the current session's embeddings, never from stale cached data.

Control Settings